How To Install R Sparklyr H2O Tensorflow Keras On Centos

Requirements:

- Conda Installed - Check out how to install Conda

- Python 3 Installed - Check out how to install Python3

- Python3 Virtual Env Created - Check out how to create Python3 virtual env

- Spark Installed - Check out how to install Spark
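One rough way to confirm the requirements above from a shell is to check that the expected commands are on your PATH. This is only a sketch: `check_cmd` is a hypothetical helper name, and the command names `conda`, `python3` and `spark-submit` are assumptions about how these tools are exposed on your machine (Spark in particular may be installed without `spark-submit` being on PATH).

```shell
#!/bin/sh
# Report whether a command is available on PATH.
# Always returns 0, so the loop below keeps going after a miss.
check_cmd() {
    if command -v "$1" >/dev/null 2>&1; then
        echo "found: $1"
    else
        echo "missing: $1"
    fi
}

# Assumed command names for the requirements listed above.
for cmd in conda python3 spark-submit; do
    check_cmd "$cmd"
done
```

Any line starting with "missing:" points at a requirement to revisit before continuing. The check does not cover the Python virtual environment, since its location depends on how you created it.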

Assuming the above requirements are met, let's first make sure the EPEL repository is installed.

Run the following command.

sudo yum -y install epel-release

How to install R on Centos

Now we can install R using the following command.

sudo yum -y install R

How to install R H2O library on Centos

Let's set up a yum repository for the machine learning package H2O. Create the repo file /etc/yum.repos.d/h2o-rpm.repo using vi. Files under /etc/yum.repos.d are owned by root, so open the editor with sudo.

sudo vi /etc/yum.repos.d/h2o-rpm.repo

Add the following to that file.

[bintray-h2o-rpm]

name=bintray-h2o-rpm

baseurl=https://dl.bintray.com/tatsushid/h2o-rpm/centos/$releasever/$basearch/

gpgcheck=0

repo_gpgcheck=0

enabled=1
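If you would rather not edit the file by hand, the same content can be written from the shell. The sketch below is one way to do that: `write_h2o_repo` is a hypothetical helper name, and the repository definition is copied unchanged from the lines above without checking that the URL is still reachable. It writes to a temporary file first so you can inspect the result before copying it into place.

```shell
#!/bin/sh
# Write the repo definition from this article to the path given as $1.
# The quoted 'EOF' keeps $releasever and $basearch literal,
# so that yum expands them later instead of the shell expanding them now.
write_h2o_repo() {
    cat > "$1" <<'EOF'
[bintray-h2o-rpm]
name=bintray-h2o-rpm
baseurl=https://dl.bintray.com/tatsushid/h2o-rpm/centos/$releasever/$basearch/
gpgcheck=0
repo_gpgcheck=0
enabled=1
EOF
}

# Write to a scratch file and print it for inspection.
tmp_repo=$(mktemp)
write_h2o_repo "$tmp_repo"
cat "$tmp_repo"

# When the content looks right, copy it into place as root:
#   sudo cp "$tmp_repo" /etc/yum.repos.d/h2o-rpm.repo
```

The quoted heredoc delimiter is the important detail here. With an unquoted `EOF`, the shell would replace `$releasever` and `$basearch` with empty strings and the baseurl would be broken.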

Now we can install the R packages for H2O. Let's bring up the R REPL by typing R in your bash or zsh shell.

R

Now run the following commands to install the H2O R packages.

if ("package:h2o" %in% search()) { detach("package:h2o", unload=TRUE) }

if ("h2o" %in% rownames(installed.packages())) { remove.packages("h2o") }

pkgs <- c("RCurl","jsonlite")

for (pkg in pkgs) {

if (! (pkg %in% rownames(installed.packages()))) { install.packages(pkg) }

}

install.packages("h2o", type="source", repos=(c("http://h2o-release.s3.amazonaws.com/h2o/latest_stable_R")))

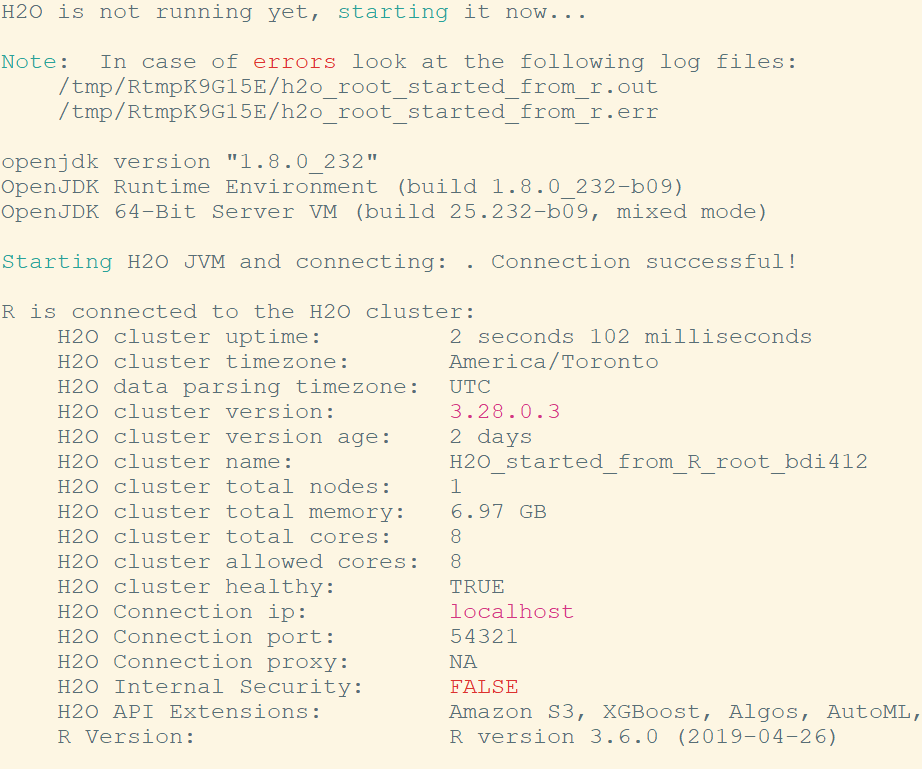

If you see the following output, it means H2o is installed successfully.

Run the following code in your R REPL to check that H2O is working.

library(h2o)

localH2O = h2o.init()

demo(h2o.kmeans)

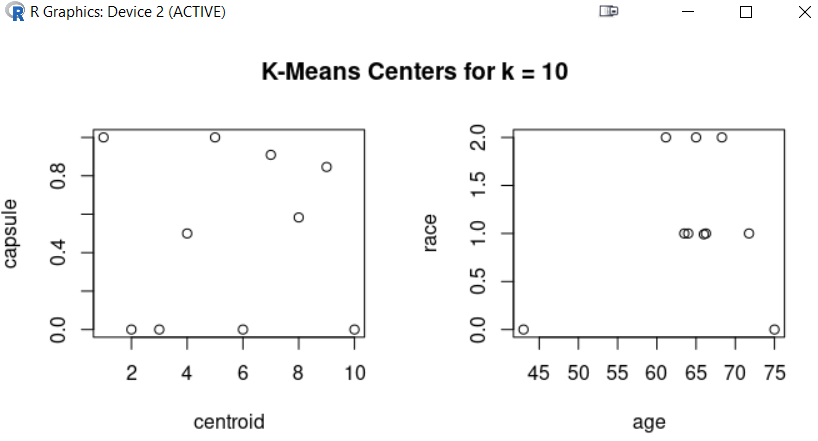

You should see a GUI like this.

So far so good. Let's install Keras and TensorFlow now.

How to install R Keras and Tensorflow

In your R REPL, load the reticulate package. If it is not installed yet, run install.packages("reticulate") first.

library(reticulate)

To install Keras, TensorFlow and all their dependencies, we will use py_install, which comes with reticulate.

py_install(c('keras', 'tensorflow'), envname = 'py37', method = "auto")

envname='py37' - py37 is the name of the Python 3 virtual environment on my machine. Replace it with the name of your own virtual env.

Now you have both Keras and TensorFlow installed.

How to install Spark R package sparklyr

First install the libcurl-devel package. Otherwise you might run into the following error.

Configuration failed because libcurl was not found.

In your bash shell, run the following yum command.

sudo yum -y install libcurl-devel

Let's install the R package sparklyr. In your R REPL, run the following command.

install.packages("sparklyr")

Let's test that Spark works from R.

library(sparklyr)

sc <- spark_connect(master = "local")

If the above commands run without any errors, the setup is working.
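If the connection fails instead, one thing worth checking is where sparklyr is looking for Spark. As far as I know, spark_connect reads the SPARK_HOME environment variable by default, so a quick look at that variable from the shell can narrow things down. The helper name `spark_home_status` below is hypothetical, and the check only confirms that the directory exists, not that it holds a working Spark installation.

```shell
#!/bin/sh
# Print a one-line status for the SPARK_HOME environment variable.
spark_home_status() {
    if [ -z "${SPARK_HOME:-}" ]; then
        echo "SPARK_HOME is not set"
    elif [ -d "$SPARK_HOME" ]; then
        echo "SPARK_HOME points to an existing directory: $SPARK_HOME"
    else
        echo "SPARK_HOME does not exist: $SPARK_HOME"
    fi
}

spark_home_status
```

Run this in the same shell session from which you start R, since R inherits its environment variables from that shell.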

At this point, we are done. If you also want to access R from a Jupyter notebook, follow the steps below.

How to access R in Jupyter notebook

In your R REPL, run the following commands.

install.packages('IRkernel')

IRkernel::installspec()

Now restart your Jupyter notebook. You should see R as one of the available kernels, and you should be able to use all the machine learning libraries we installed from the notebook.
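If the R kernel does not show up, you can check from the shell whether it was registered. The sketch below assumes the kernel was installed under the name `ir`, which I believe is the default used by IRkernel::installspec(); adjust the name if you passed a different one. `has_ir_kernel` is a hypothetical helper that reads the output of `jupyter kernelspec list` on standard input.

```shell
#!/bin/sh
# Read `jupyter kernelspec list` output on stdin.
# Succeed (exit 0) if a kernel named "ir" appears in the first column.
has_ir_kernel() {
    awk '$1 == "ir" { found = 1 } END { exit !found }'
}

if command -v jupyter >/dev/null 2>&1; then
    if jupyter kernelspec list | has_ir_kernel; then
        echo "R kernel is registered"
    else
        echo "R kernel not found; re-run IRkernel::installspec() in R"
    fi
else
    echo "jupyter is not on PATH"
fi
```

If jupyter is reported as missing, activate the Python environment that contains Jupyter first, then start R from that same shell before running IRkernel::installspec() again.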
